Factsheet

Principal Artist:

John Capogna

Based in San Francisco, CA

Genres:

Adventure Game, Art Game, Narrative-Driven, Interactive Fiction, Walking Simulator

Release date:

Q3 2020

Website:

johncapogna.com

Contact:

john.s.capogna@gmail.com

Social:

Instagram

Overview

Nomad is a story exploding, a meta-narrative set in the wilderness and cities of the modern-day Oregon Trail.

Description

Nomad follows the story of Kid, a lonely narrator who has opted out of society and who is making a video game to connect with the society she has left behind. Hers is a video game travelogue that explores isolation and the paradox of longing to escape society while simultaneously striving to build authentic human connections through creativity and technology. The project assets will be gathered on the road using photogrammetry and other geographic information technology as the artist, John Capogna, under the guise of Kid, retraces the historic American route of the Oregon Trail from Independence, Missouri, through the Great Plains and Rocky Mountains, and on into the Pacific Northwest.

Using 360 video and photography, photogrammetry, LIDAR and other emerging technology, John will capture environments and translate them into virtual spaces as the setting for a narrative that comments on the intersection of American mobility, cultural mythology, and personal isolation.

Nomad critically examines our collective regard for place, mobility, and the allure of digital, physical, and personal frontiers. Additionally, Nomad invites a discussion on the blurring of the distinction between physical and digital space and the artistic endeavor to re-convey the geographic world through technology. Parallel to this examination is the tension between one’s role and responsibilities within society and the individualist urge to leave these roles behind and explore the unknown. This urge for exploration is embodied in the metaphor of the Oregon Trail, which is both a physical and historical space and a cultural and technological one: the American frontier mythology and the video game that celebrated and satirized it. Romanticized in literature and film, this American concept of leaving an old world to conquer the unknown is echoed in the goal structure of video games, which are organized into levels of increasing, and unknown, challenge.

Nomad Trailer

Current gameplay trailer (October 2018)

Narrative

The proposed narrative is broken into three distinct Acts. To view a narrative outline of each act and chapter, as well as moodboards and sample scripts, please visit this link. The page is password protected. If you do not have access, please reach out to me at this email address.

Technical Research / Prototyping

The remainder of this page documents our research and experiments with a variety of technologies. First, we explored the use of 360 degree cameras to create photographic and videographic spherical environments in the Unity Game Engine. We also explored various first-person navigation techniques in-game.

Following that, we document the process for using DSLR cameras to capture objects photogrammetrically in the field and convert them into game objects for our environments.

Finally, we look to the future with proposed experiments using 360 degree images and LIDAR technology to create a technique for mapping pixel color data onto volumetric data for use in our environments. This has yet to be explored in depth, but it is the next area of research.

Ultimately, the goal is to create a standard technique or set of techniques, and master the associated workflows, for capturing place while on the real-life journey for later use in the fictional game.

In the second half of 2017, as part of a creative incubator at The Gray Area Foundation, I created a narrative prototype that attempted to weave many of the techniques below into a hybrid digital / videographic experience. I tried to blend reality and video games, giving our protagonist Kid a voice by which she could talk to the player from within the game. Below is the gameplay footage from this prototype:

360 Degree

Research / Evaluative Process for 360 Degree Cameras

Initial tests with Ricoh Theta Gen 1 model. With low resolution and without sufficient image stabilization, it would not work for video gameplay.

Videos output from Ricoh are dual fisheye. Using software, we can convert to equirectangular.
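The conversion software is not named here, but the underlying idea can be sketched. For every direction in the equirectangular output, we work out which of the two lenses saw it and where it falls inside that lens's circular image. The sketch below assumes an ideal equidistant fisheye (distance from the circle's center grows linearly with the angle off the lens axis) and a hypothetical 190 degree field of view per lens; a real camera needs its own calibration, and the function name is ours.

```python
import math

def equirect_to_fisheye(lon, lat, fov_deg=190.0):
    """Map a view direction to normalized coordinates on one of two fisheye images.

    lon: longitude in radians, -pi..pi (0 is the front lens axis)
    lat: latitude in radians, -pi/2..pi/2
    Returns (lens, x, y): lens is "front" or "back"; x and y are measured
    from the center of the fisheye circle, with 1.0 at the edge of the
    assumed field of view.
    """
    # Unit direction vector: +z is the front lens axis, +y is up.
    dx = math.cos(lat) * math.sin(lon)
    dy = math.sin(lat)
    dz = math.cos(lat) * math.cos(lon)

    lens = "front" if dz >= 0 else "back"
    if lens == "back":
        # Rotate 180 degrees about the vertical axis into the back lens's frame.
        dx, dz = -dx, -dz

    theta = math.acos(max(-1.0, min(1.0, dz)))   # angle off the lens axis
    r = theta / math.radians(fov_deg / 2.0)      # equidistant model (assumption)
    phi = math.atan2(dy, dx)                     # angle around the lens axis
    return lens, r * math.cos(phi), r * math.sin(phi)
```

Running this for each output pixel and sampling the matching fisheye pixel yields the equirectangular frame. Blending the overlap between the two lenses is left out of this sketch.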

Image stabilization with mini-handheld gimbal. The resulting video is not stable enough.

Image sequence for Unity level test (below). Gameplay demo is in point-and-click style.

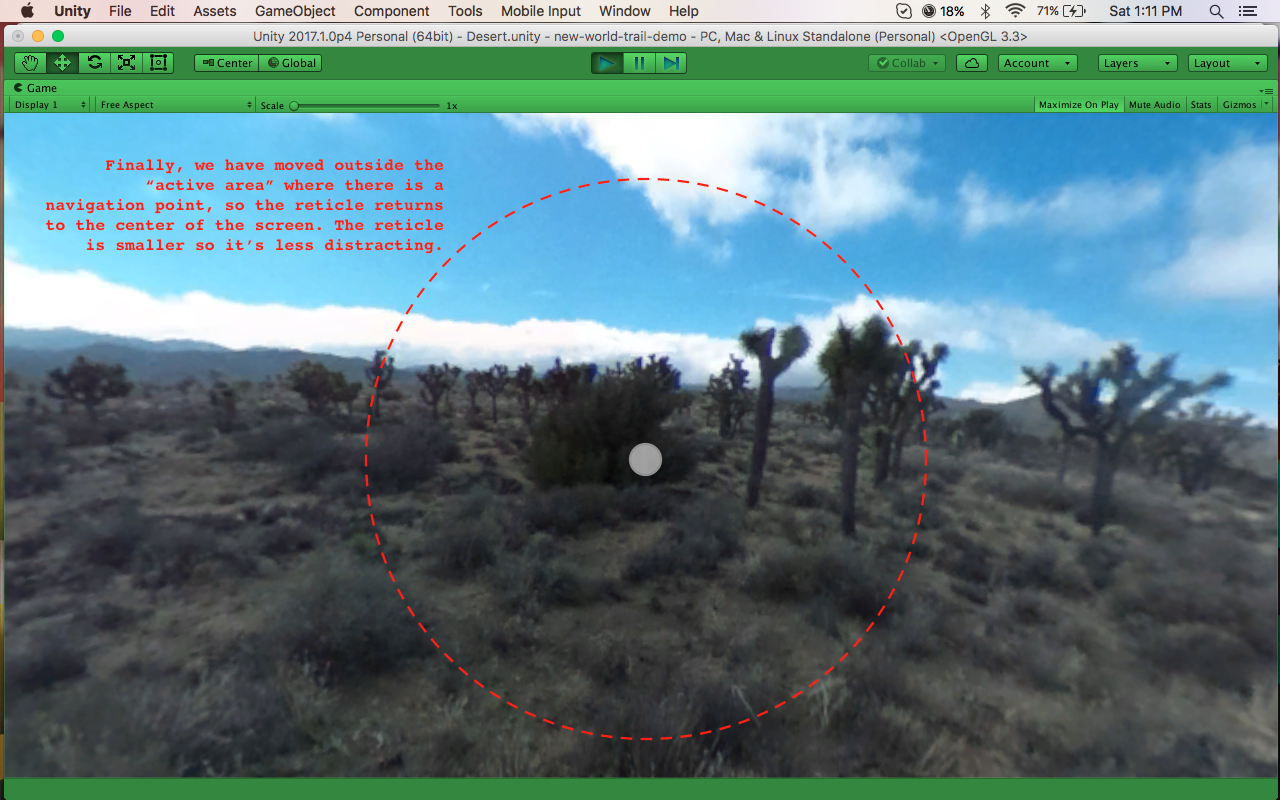

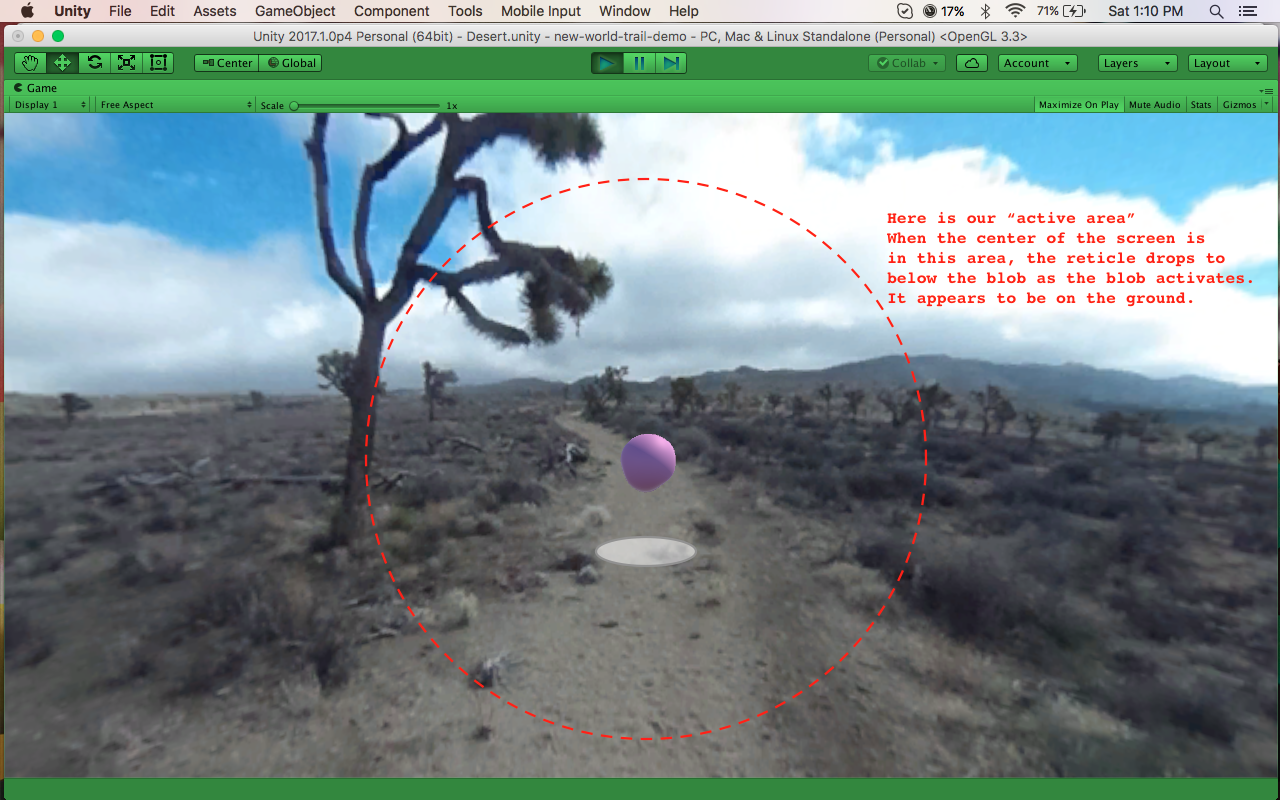

Exploration on creating a "jump" style navigation technique. There is an "active area" around the blob in which the reticle appears below it. Outside this area, the reticle returns to the center of the screen.

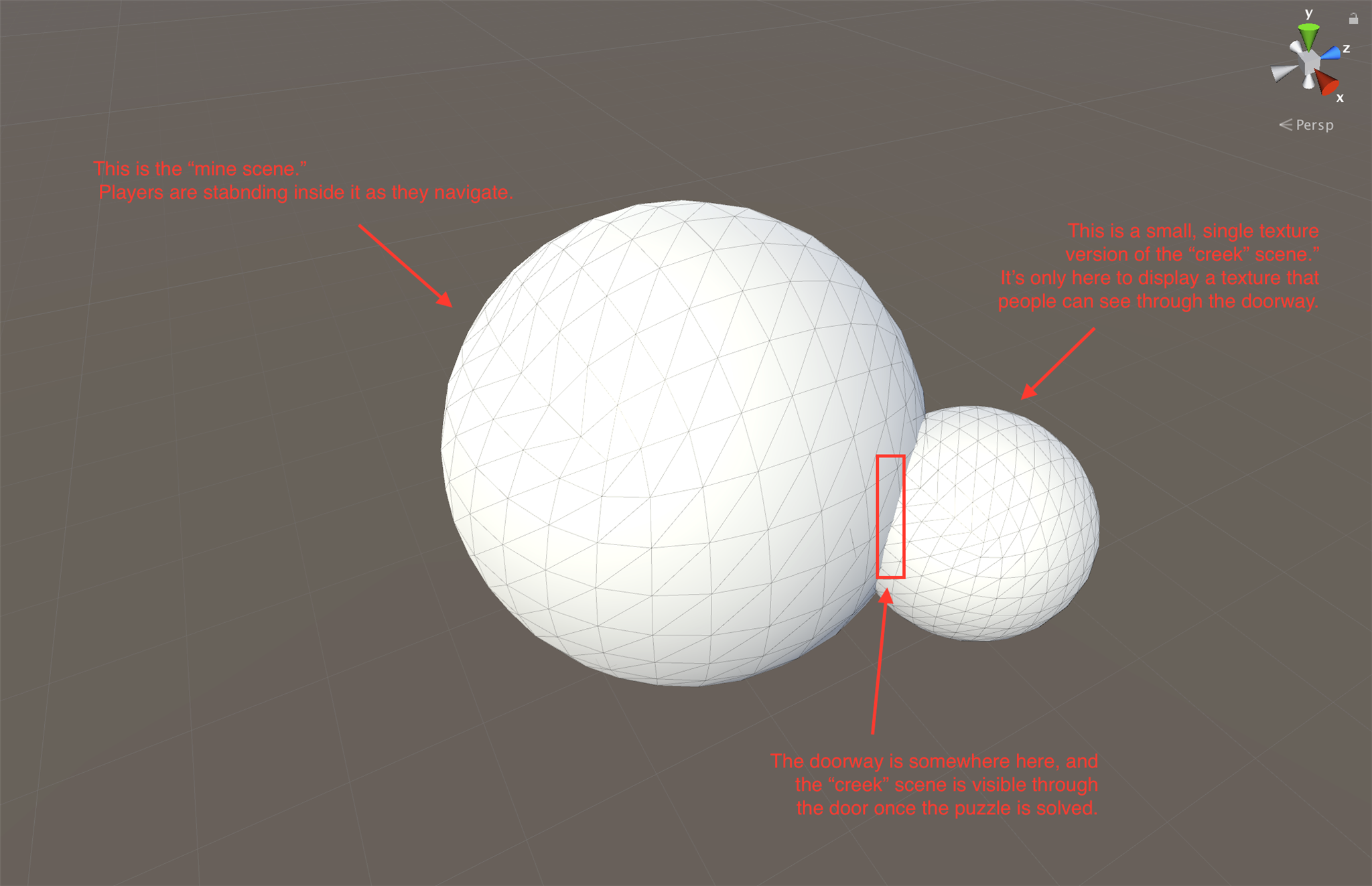
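The snapping rule for the "jump" reticle can be sketched in a few lines. This is an illustration rather than the prototype's code: it assumes the active area is a circle around the blob's on-screen position, measured from the screen center where the player is looking, and it uses a bottom-left screen origin so that "below" means a smaller y. The radius and offset values are hypothetical.

```python
import math

def reticle_position(blob, screen_center, active_radius=120.0, offset_below=40.0):
    """Return where to draw the reticle, in screen pixels (origin bottom-left)."""
    distance = math.hypot(blob[0] - screen_center[0], blob[1] - screen_center[1])
    if distance <= active_radius:
        # Inside the active area: snap the reticle just below the blob.
        return (blob[0], blob[1] - offset_below)
    # Outside the active area: the reticle rests at the center of the screen.
    return screen_center
```

For example, with the screen center at (480, 270), a blob at (500, 300) pulls the reticle to (500, 260), while a blob at (900, 300) leaves it at the center.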

Creating a "parallax-style" effect. We isolate an area over the doorway (yellow) as a cutout. In our scene, attached to the player's main skybox, is a smaller inverted sphere with the next scene's texture on the inside so that the player can "see" into the next scene. When the player camera rotates left, we rotate the texture on the smaller sphere right, and vice versa. This gives dimensionality to the scene on the other side of the doorway.
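The counter-rotation can be expressed as a single function of the camera's yaw. This is a minimal sketch under our own assumptions: yaw is measured in degrees from the pose facing the doorway, and the `strength` factor (how far the inner sphere turns relative to the camera) is a hypothetical tuning value, not one taken from the prototype.

```python
def inner_sphere_yaw(camera_yaw_deg, strength=0.25):
    """Yaw to apply to the inner sphere, opposite in sign to the camera's yaw."""
    # Wrap the camera yaw into -180..180 so that 350 degrees counts as -10.
    delta = (camera_yaw_deg + 180.0) % 360.0 - 180.0
    # Turn the inner sphere the other way, scaled down by the strength factor.
    return -strength * delta
```

A smaller `strength` makes the far scene drift only slightly, which suggests greater distance; a larger one exaggerates the effect.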

Initial tests with Guru Gimbal stabilizer. A huge improvement in image stabilization. No video disturbance even going down stairs. New camera used is the Xiaomi Mi Sphere, which has 4K video resolution.

Additional Experiments

360 Degree Hyperlapse (sequence of images).

Timelapse test and video test for car object to translate from 2D to 3D space.

Test with Graphics FX and 360 Degree Video.

VR Post Effects

Using Mettle Skybox Suite Post FX we can create video effects in equirectangular space. This is important because the effects are preserved at the "poles" of the video where the texture becomes warped.
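To illustrate why the poles need special handling: every row of an equirectangular frame spans the full 360 degrees, but the circle of latitude it represents shrinks with the cosine of the latitude, so pixels are stretched horizontally by 1 / cos(latitude). The small sketch below (function name ours) computes that factor per row. An effect applied naively in flat image space would be smeared by the same factor near the top and bottom of the frame.

```python
import math

def horizontal_stretch(row, height):
    """Horizontal stretch of an equirectangular pixel row relative to the equator."""
    # Latitude of the row's center: +pi/2 at the top of the frame, -pi/2 at the bottom.
    lat = (0.5 - (row + 0.5) / height) * math.pi
    return 1.0 / math.cos(lat)
```

In a 1000 pixel tall frame the middle rows have a factor very close to 1, while the top row is stretched several hundred times.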

Results

Point And Click Navigation

360 Video Interstitial with Post FX.

Photogrammetry

Result / Technical Demo

A full render of the cabin scene. Assets were captured using photoscans of environments in Mt. Baker-Snoqualmie National Forest.

Process

Gathering reference images.

A cloudy day provides the best, diffuse lighting. We attempt to capture the sharpest picture possible without any shadows or noise. For a tree, we take a series of photographs moving around the trunk in 360 degrees, taking each new photo at equidistant intervals.

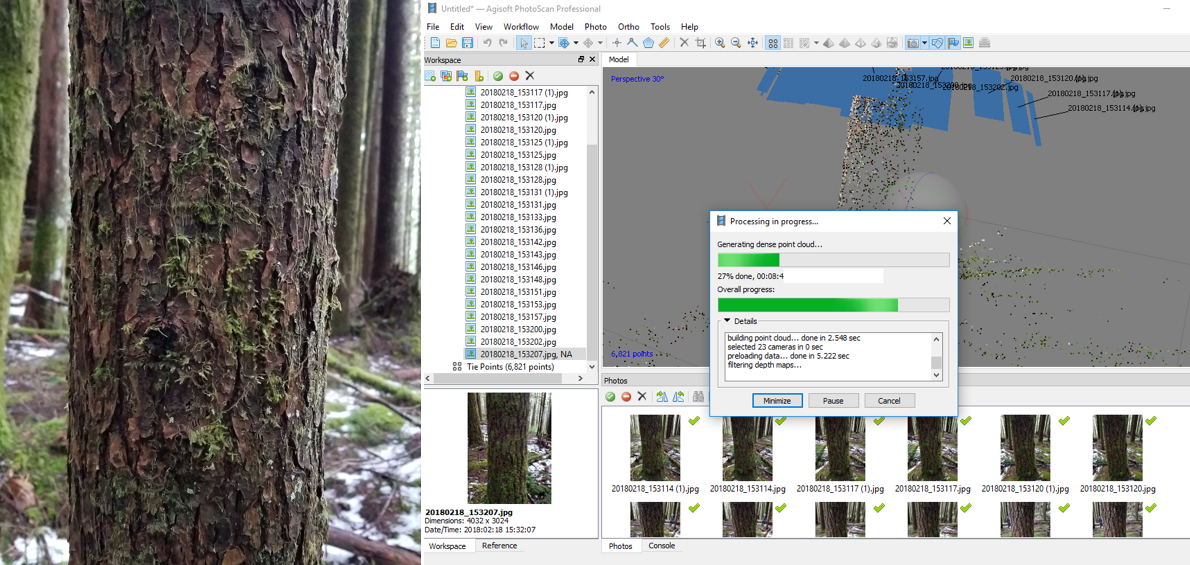

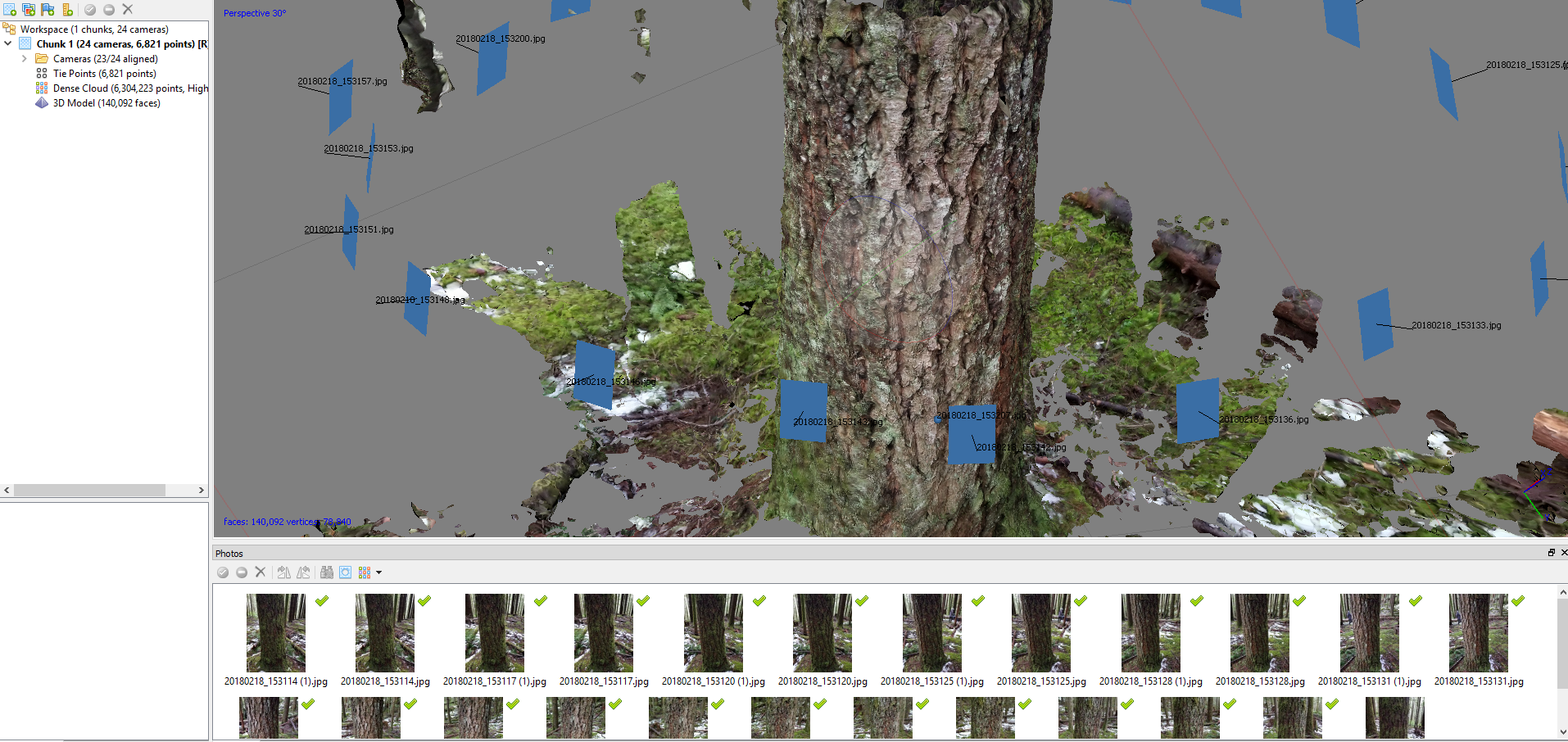
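The equidistant spacing around a trunk can be planned ahead of time. The sketch below lists evenly spaced camera positions on a circle around the subject, with the bearing of each one. The shot count and radius in the usage example are hypothetical, since no specific numbers were recorded for these captures.

```python
import math

def capture_positions(center, radius, count):
    """Evenly spaced camera positions on a circle around a subject.

    center: (x, y) of the subject on the ground plane
    Returns a list of (x, y, bearing_deg) tuples, one per photo.
    """
    step = 2.0 * math.pi / count  # equal angular interval between shots
    positions = []
    for i in range(count):
        angle = i * step
        positions.append((
            center[0] + radius * math.cos(angle),
            center[1] + radius * math.sin(angle),
            math.degrees(angle),
        ))
    return positions

# Example: 24 photos, 2 meters from the trunk, one every 15 degrees.
shots = capture_positions((0.0, 0.0), 2.0, 24)
```

More shots increase the overlap between neighboring photos, which generally helps the alignment step, at the cost of longer capture and processing time.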

We bring the photos into Agisoft Photoscan. The software automatically aligns the images and creates a point cloud. This is used to generate the first rough mesh, and render some textures.

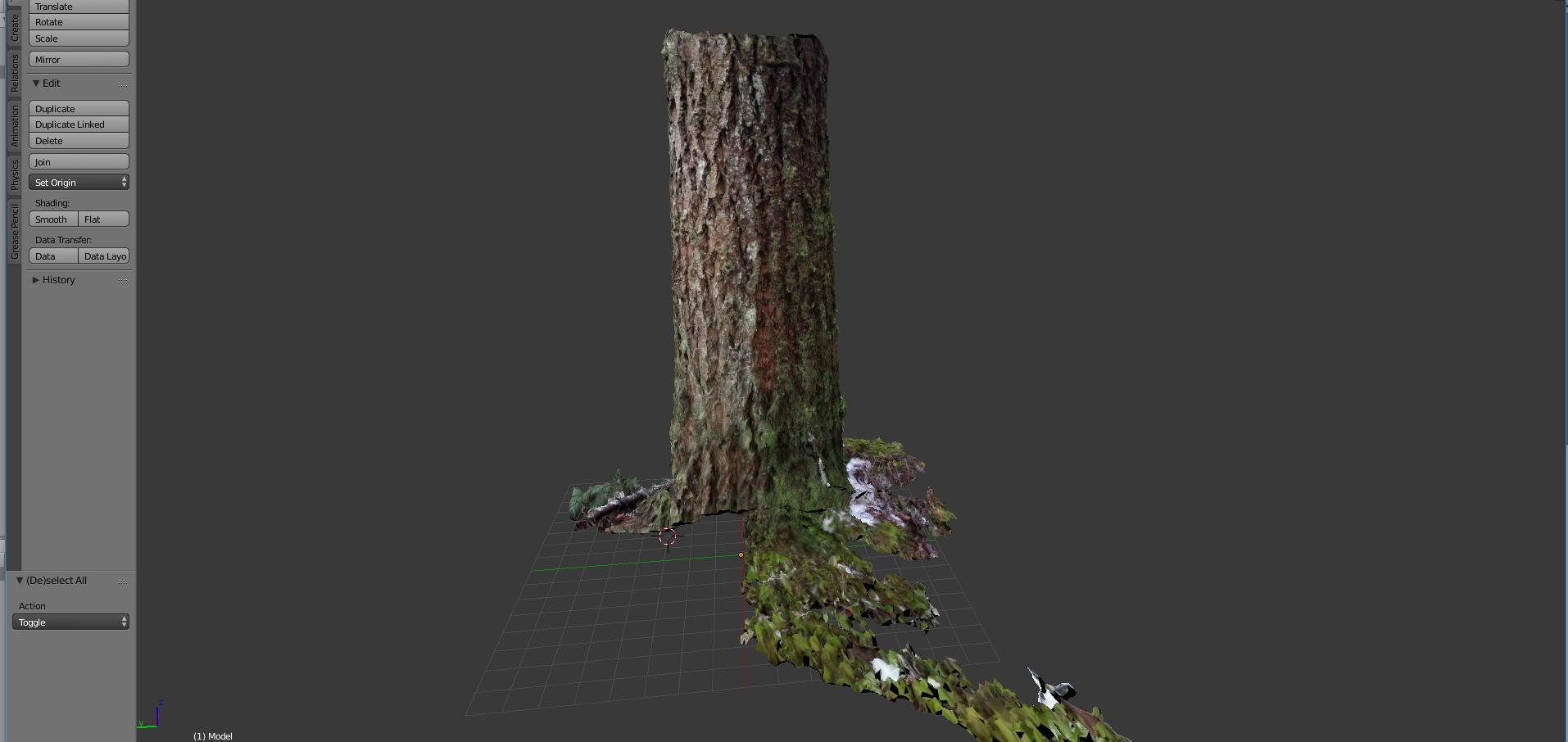

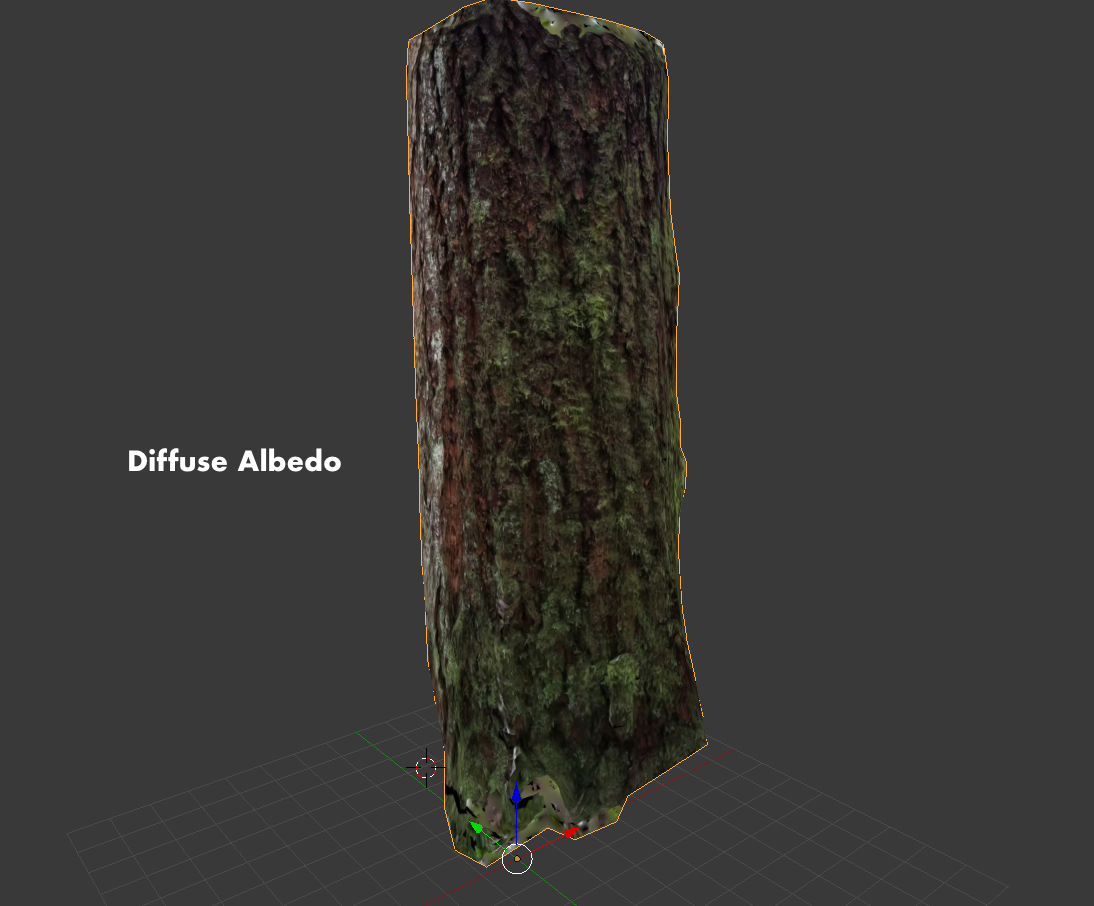

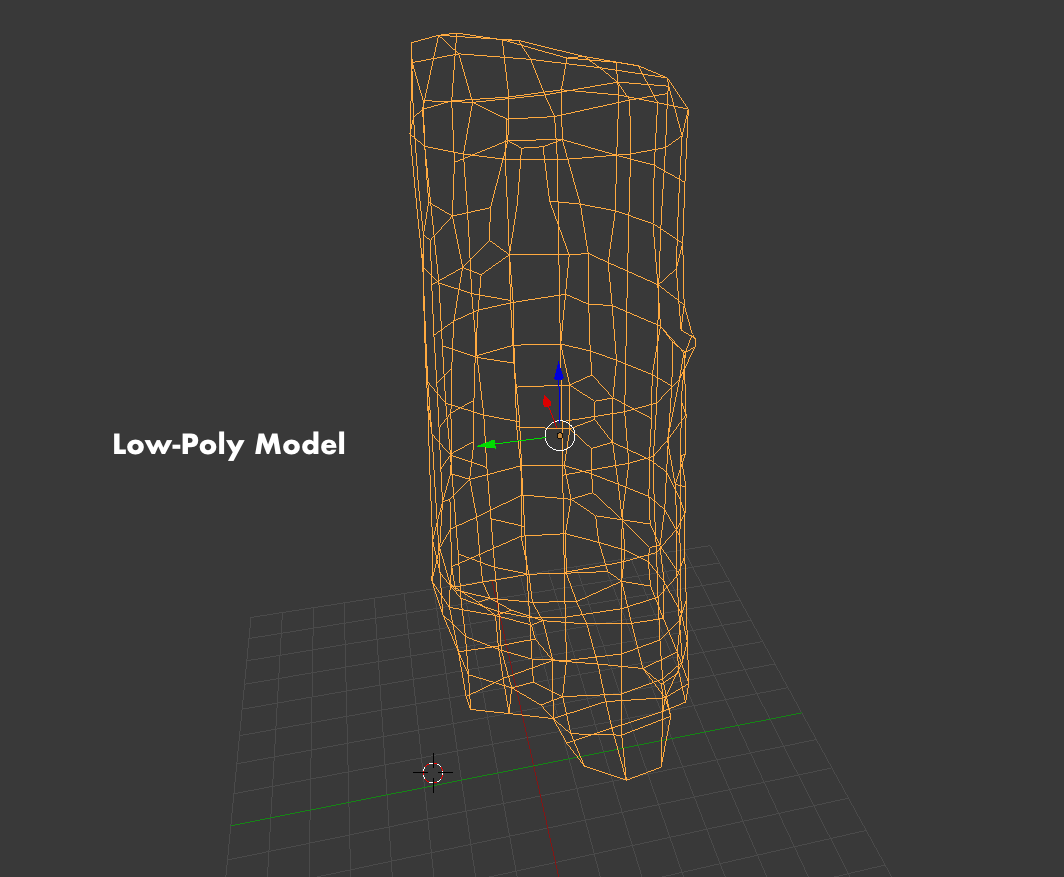

To post-process, we bring the mesh into Blender, decimating the model to reduce vertex/face count, then cleaning up the mesh and removing loose geometry, to create a low-poly remesh. As this is the final mesh, we unwrap the UV maps and render textures onto it using the high-poly photoscan as a reference.

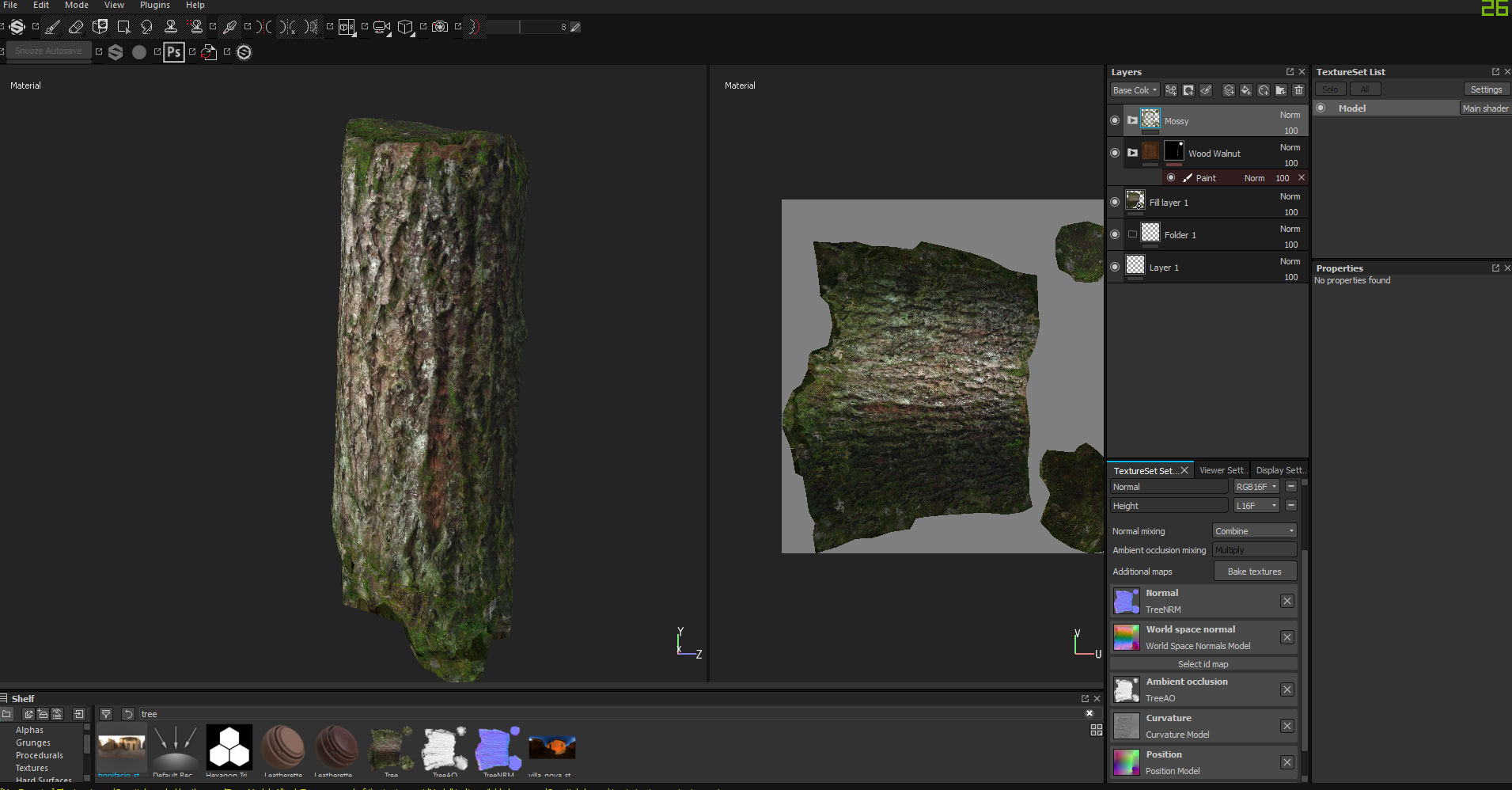

With the final mesh created and UV mapped, and a full texture set rendered, we bring everything into Substance Painter to fill any holes in the texture and add detail, as well as composite and convert the texture maps. We export the textures directly into a format Unity uses.

The final asset placed in the scene.

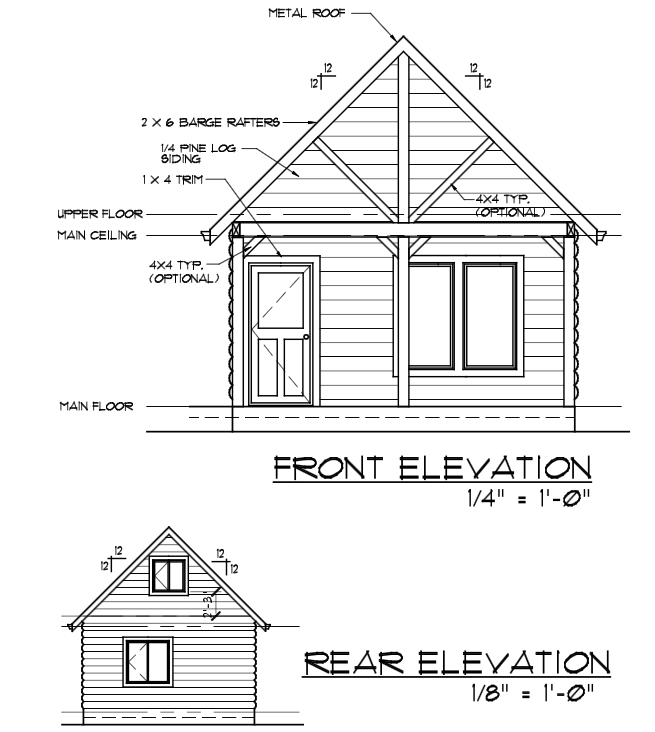

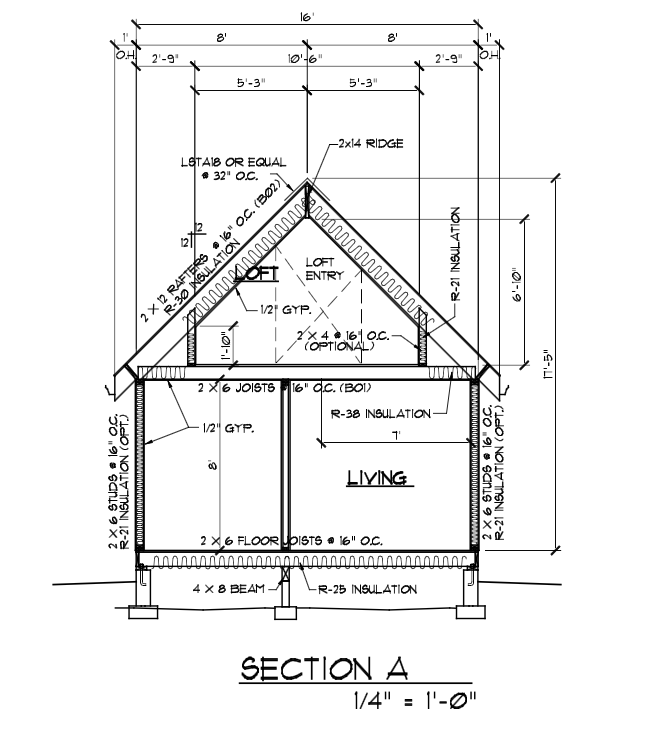

We use an actual cabin plan to create a realistic replica.

Laser Scanning

Inspiration

This section is entirely theoretical and represents the next steps in my research. I would like to explore these various methods of place capture and environment representation.

Pixel manipulation on 360 degree video to create depth. Courtesy of Walkabout Worlds.

Images courtesy of Irene Alvarado. Using a DJI Phantom 4 Pro and software such as Pix4D, we can create assets via aerial photogrammetry. These types of images would lend themselves well to the abstract cities in later levels of Nomad.

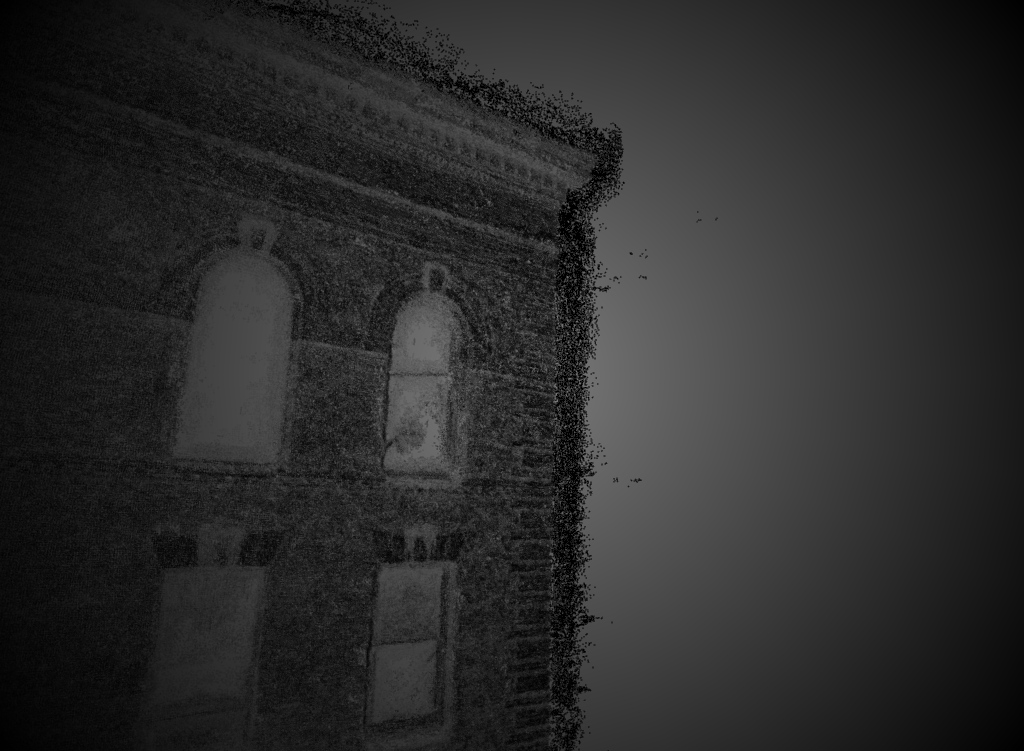

Taking this a step further, we can experiment with aerial LIDAR scanning with affordable scanning solutions such as the Scanse Sweep Sensor. The resulting point clouds could be manipulated as pixels in a game engine as we see with the following example:

With the above solutions combined, we create photogrammetric imagery with depth information. This has been done in two dimensions with examples such as Scatter's Depthkit tool. A laser scanner (in this case, a Microsoft Kinect) is lined up and calibrated with an RGBA photograph to produce a depth image with color maps. These points can be manipulated at runtime to produce the following effects:

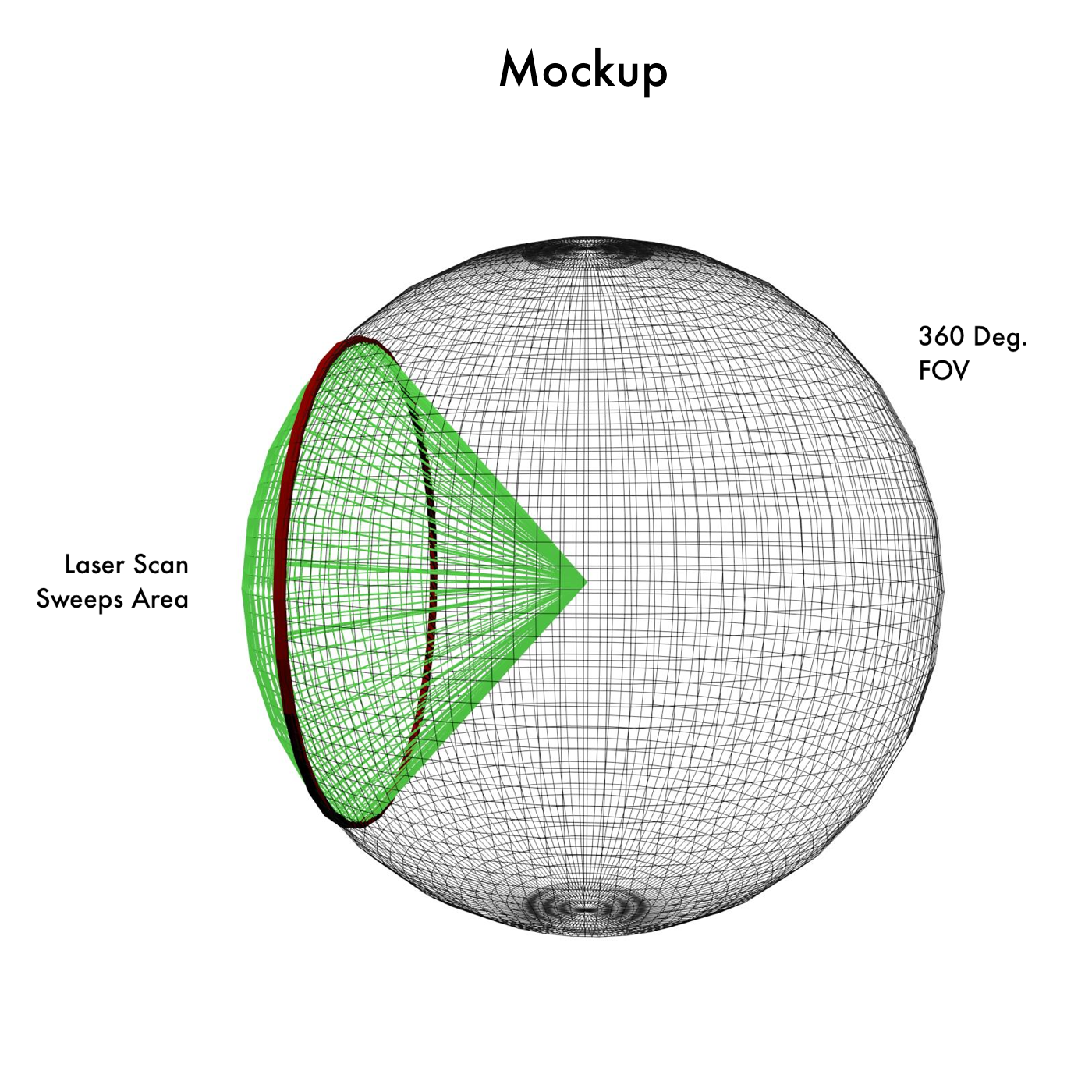

We propose a similar technique for producing "volumetric" and photogrammetric images in 360 degrees. We mount a laser scanner and 360 degree camera at the same location, such that they have the same field of vision. We can then create workflows for processing and calibrating these images, and tools for other artists to use in a game engine such as Unity.
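Since this technique is still a proposal, the following is only a sketch of the core step: turning a 360 degree color image and a matching depth map into colored 3D points. It assumes the scanner and camera share one origin and have already been calibrated onto the same equirectangular grid, which is the hard part in practice. The function name and data layout are ours.

```python
import math

def equirect_to_points(color, depth):
    """Combine an equirectangular color image and depth map into colored points.

    color: rows of (r, g, b) tuples
    depth: rows of distances in meters, same shape; 0 or None means no return
    Returns a list of (x, y, z, r, g, b) tuples with +y up and +z forward.
    """
    height = len(depth)
    width = len(depth[0])
    points = []
    for row in range(height):
        # Latitude of the pixel center: +pi/2 at the top row, -pi/2 at the bottom.
        lat = (0.5 - (row + 0.5) / height) * math.pi
        for col in range(width):
            d = depth[row][col]
            if d is None or d <= 0:
                continue  # the scanner saw nothing in this direction
            # Longitude of the pixel center: -pi at the left edge, +pi at the right.
            lon = ((col + 0.5) / width - 0.5) * 2.0 * math.pi
            x = d * math.cos(lat) * math.sin(lon)
            y = d * math.sin(lat)
            z = d * math.cos(lat) * math.cos(lon)
            points.append((x, y, z) + tuple(color[row][col]))
    return points
```

The resulting list could then be loaded into a game engine as a point cloud, where each point keeps the color of the pixel it came from.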

Currently, all-in-one cameras cost in the range of $16,000. By using cheaper alternatives and creating accessible tools, we can expect to produce images and depth maps like this at a fraction of the cost: